Fast, Low-Cost & Green

Taraxa’s unique t-Graph consensus combines a blockDAG, an anchor chain, and asynchronous PBFT to scale blockchain technology without sacrificing security or decentralization.

EVM-Compatible Network

Taraxa is EVM-compatible: deploy your Ethereum dApps with just a few clicks!

Low-Cost Transactions

Send transactions for the cost of… next to nothing.

No Network Congestion

5,000 TPS, near-instant transaction inclusion, sub-second block time, and 3.7-second True Finality.

True Decentralization

A truly permissionless network where anyone can be a validator. It even runs on a Raspberry Pi!

AI-Enabled Web3 Ecosystem

AI + blockchain is the future, and Taraxa’s ecosystem is paving the way.

trendSpotter

Stay ahead of the game by discovering trends before they're trending. (coming soon)

Stake on Taraxa

Earn rewards and help secure the network by staking your TARA.

Total Stake

2,109 million TARA

Staking Yield

~17.6%

Validator Commission

~2.0%

Staking Ratio

54.0%

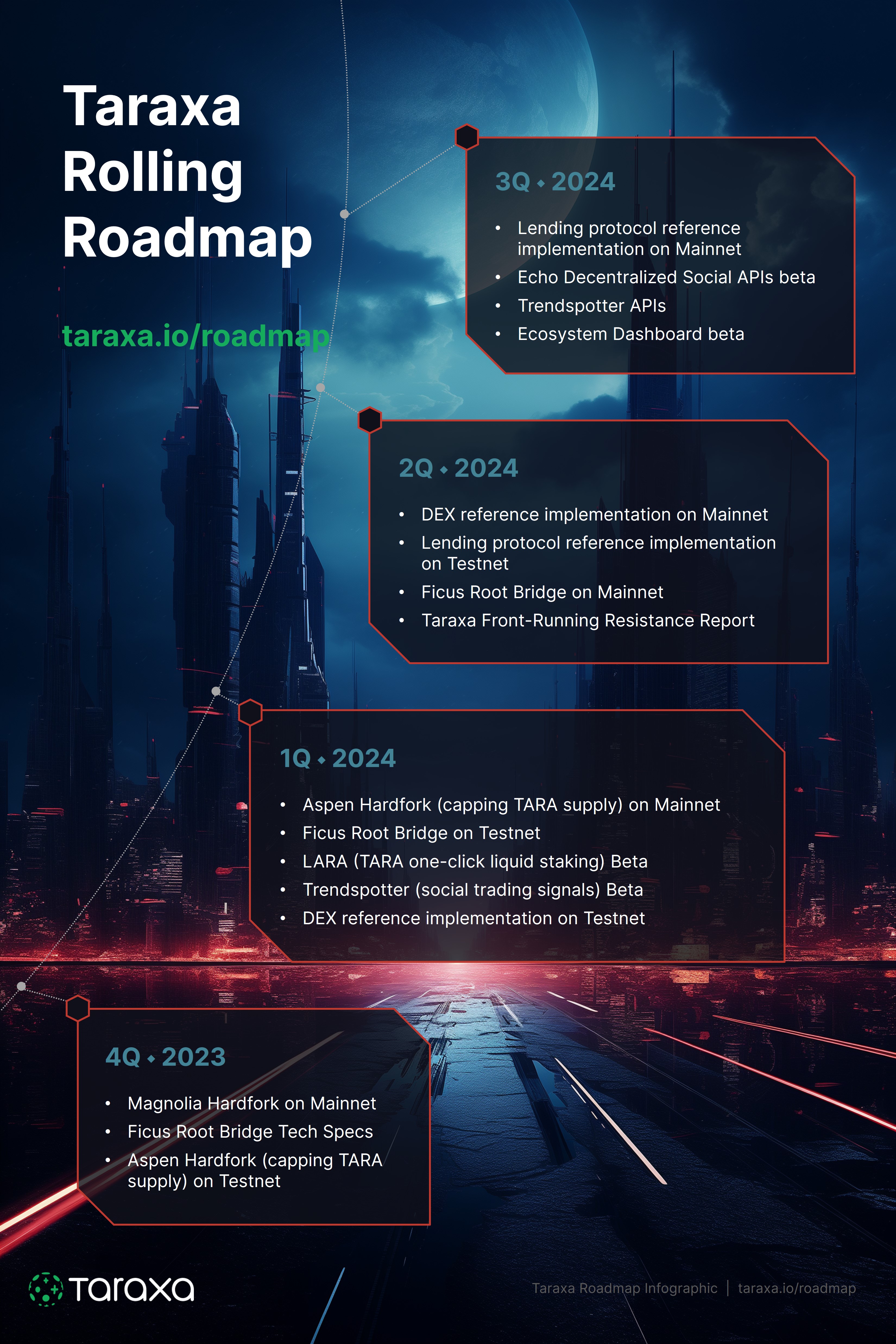

Rolling Development Roadmap

Development will focus on driving adoption of the Taraxa ecosystem.

Learn More

Dig deeper into the Taraxa ecosystem!